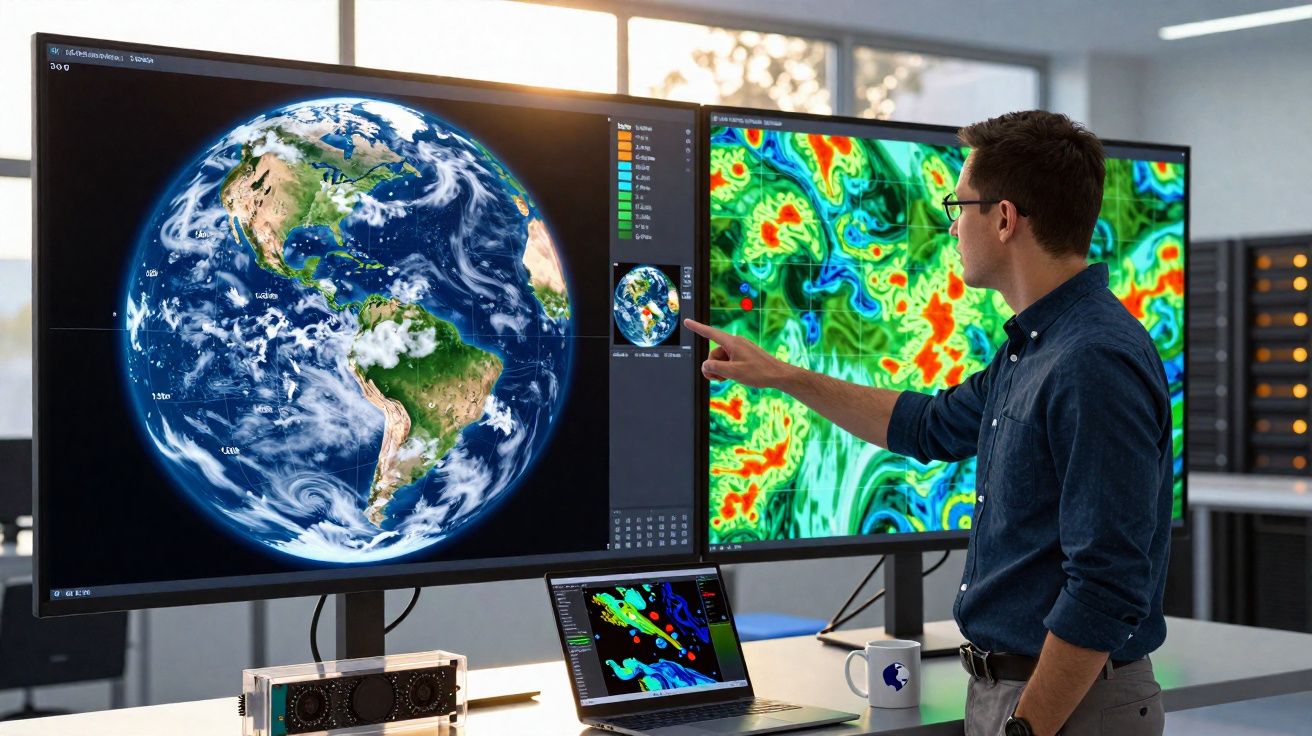

Weather prediction has a reputation for being a bit erratic - and climate modelling is more challenging still. Even so, our growing ability to anticipate what the natural world may deliver is largely down to two developments: improved models and ever-greater computing power.

A new paper from a team led by Daniel Klocke at the Max Planck Institute for Meteorology in Germany sets out what some climate-modelling researchers have called the "holy grail" of the discipline: a near-kilometre-scale system that unites weather forecasting with climate modelling.

Strictly speaking, the model’s grid cells are not exactly 1 km across - the system operates at a resolution of 1.25 kilometres.

At that scale, the difference is almost beside the point. Covering the Earth’s land and oceans takes an estimated 336 million surface cells, and the authors added the same number of "atmospheric" cells directly above them. That brings the calculation to 672 million cells in total.
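As a rough sanity check on those figures, the sketch below compares the quoted cell count with a back-of-envelope estimate. It assumes a total surface area of about 510.1 million km² for the Earth and, purely for simplicity, treats every cell as a square; the real grid is icosahedral, so its cells are not squares. Neither assumption comes from the paper, and the function names are illustrative.

```python
import math

EARTH_SURFACE_KM2 = 510.1e6    # assumption: approximate total surface area of the Earth
SURFACE_CELLS = 336e6          # from the article
NOMINAL_RESOLUTION_KM = 1.25   # from the article


def cells_for_square_grid(resolution_km):
    """Number of square cells of the given side length needed to tile the surface."""
    return EARTH_SURFACE_KM2 / resolution_km ** 2


def implied_cell_side_km(n_cells):
    """Side length of a square cell if the surface were split into n_cells equal squares."""
    return math.sqrt(EARTH_SURFACE_KM2 / n_cells)


# Surface cells plus the same number of atmospheric cells, as the article describes.
total_cells = 2 * SURFACE_CELLS

print(f"Square-grid estimate at 1.25 km: {cells_for_square_grid(NOMINAL_RESOLUTION_KM):,.0f} cells")
print(f"Cell side implied by 336 million cells: {implied_cell_side_km(SURFACE_CELLS):.3f} km")
print(f"Total cells (surface + atmosphere): {total_cells:,.0f}")
```

Both estimates land within a few percent of the article's figures, which is about as close as a square-cell approximation can be expected to get.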

For every one of those cells, the researchers ran a linked set of models representing the planet’s main interacting dynamic systems. They grouped these into two broad classes: "fast" and "slow" processes.

Daniel Klocke and the kilometre-scale model: fast and slow processes

The "fast" category covers the energy and water cycles - essentially, the day-to-day mechanics of weather. To follow these clearly, a model needs very fine spatial detail, such as the 1.25 km resolution this new system can provide.

To handle that part of the simulation, the team used the ICOsahedral Nonhydrostatic (ICON) model, developed by the German Weather Service together with the Max Planck Institute for Meteorology.

By contrast, "slow" processes include the carbon cycle along with shifts in the biosphere and ocean geochemistry. These play out over years or even decades, rather than over the few minutes it might take a thunderstorm to move from one 1.25 km cell into the next.

The key advance described in the paper is the coupling of these fast and slow processes - something the authors are, understandably, pleased to highlight. In many conventional approaches, models complex enough to include both sets of systems are only computationally manageable at resolutions coarser than 40 km.

So how did they manage it? The answer is a mixture of careful software engineering and a large amount of cutting-edge hardware. What follows leans into the computing side of the story, so if software and hardware engineering are not your thing, you may want to skip the next few paragraphs.

The underlying model used for much of this effort was originally written in Fortran - the bane of anyone who has ever tried to modernise code written before 1990.

Over time, it had also accumulated numerous additions that left it poorly suited to today’s computing architectures. The researchers therefore opted to use a framework called Data-Centric Parallel Programming (DaCe), designed to organise and move data in ways that better match modern systems.

In this case, the modern systems were JUPITER and Alps, two supercomputers based in Germany and Switzerland respectively, both built around Nvidia’s new GH200 Grace Hopper superchip.

Within these chips, a GPU (the sort commonly used to train AI - here the Hopper) is paired with a CPU (in this case an ARM design, labelled Grace).

This split in computing roles and strengths enabled the team to run the "fast" components on the GPU to match their quicker update cadence, while the slower carbon-cycle calculations were carried out in parallel on the CPUs.
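The division of labour described above can be pictured with a toy example. This is a minimal sketch, not the ICON code or the authors' method: two Python threads stand in for the GPU and the CPU, and every function, constant and update rule here is hypothetical. The one idea it tries to capture is that the "fast" component takes many small steps while the "slow" component takes a single large one, and the two swap results at the end of each coupling window.

```python
from concurrent.futures import ThreadPoolExecutor

FAST_STEPS_PER_WINDOW = 6  # hypothetical: fast substeps per coupling window


def run_fast(temperature, carbon, n_steps):
    """Toy 'fast' component: many small steps, with carbon held fixed."""
    for _ in range(n_steps):
        temperature += 0.01 * carbon  # hypothetical warming term
    return temperature


def run_slow(carbon, temperature):
    """Toy 'slow' component: one large step, with temperature held fixed."""
    return carbon + 0.1 * temperature  # hypothetical carbon response


def couple(temperature, carbon, n_windows):
    """Run both components side by side and exchange state after each window."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        for _ in range(n_windows):
            # Both tasks read the state from the start of the window,
            # so the result does not depend on which thread finishes first.
            fast = pool.submit(run_fast, temperature, carbon, FAST_STEPS_PER_WINDOW)
            slow = pool.submit(run_slow, carbon, temperature)
            temperature, carbon = fast.result(), slow.result()
    return temperature, carbon


if __name__ == "__main__":
    print(couple(temperature=1.0, carbon=1.0, n_windows=3))
```

Because each component only sees the other's state from the start of the window, neither has to wait mid-window, which is the property that makes it possible to run them at the same time on different hardware.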

By dividing the workload in this way, the researchers were able to use 20,480 GH200 superchips to simulate 145.7 days in a single day of computing time. The simulation involved close to 1 trillion "degrees of freedom" - meaning, in this setting, the total number of values the model had to compute. It is hardly surprising that this kind of simulation demands a supercomputer.
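To put those throughput figures in more familiar terms, here is a short back-of-envelope calculation. It is a sketch under stated assumptions: "close to 1 trillion" is rounded to exactly 1e12, a year is taken as 365.25 days, the work is assumed to be spread evenly across the superchips, and the cell total is the 672 million quoted earlier in the article. The variable and function names are illustrative.

```python
SIM_DAYS_PER_WALL_DAY = 145.7   # from the article
SUPERCHIPS = 20_480             # from the article
TOTAL_CELLS = 672e6             # from the article (surface + atmosphere)
DEGREES_OF_FREEDOM = 1e12       # assumption: "close to 1 trillion" rounded to 1e12
DAYS_PER_YEAR = 365.25          # assumption: average year length


def wall_days_for(sim_years):
    """Days of computing time needed to simulate the given number of years."""
    return sim_years * DAYS_PER_YEAR / SIM_DAYS_PER_WALL_DAY


# Assumes an even split of cells across superchips, which the article does not state.
cells_per_chip = TOTAL_CELLS / SUPERCHIPS
values_per_cell = DEGREES_OF_FREEDOM / TOTAL_CELLS

print(f"One simulated year:   {wall_days_for(1):.1f} days of computing")
print(f"One simulated decade: {wall_days_for(10):.1f} days of computing")
print(f"Cells per superchip:  {cells_per_chip:,.0f}")
print(f"Values per cell:      {values_per_cell:,.0f}")
```

On these assumptions, a single simulated year takes about two and a half days on the full machine, and a decade takes a little over three weeks.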

The downside is that simulations at this level of complexity are not going to appear at your local weather station any time soon.

Computing capacity on this scale is scarce, and large technology companies are more likely to spend it on extracting every last ounce of performance from generative AI, regardless of what that means for progress in climate modelling.

Even so, the authors’ ability to deliver such an impressive computational result merits recognition - and it leaves open the possibility that, one day, simulations of this type could become routine.

The research is available as a preprint on arXiv.

This article was originally published by Universe Today. Read the original article.
