The bell had just sounded at the end of the philosophy exam when the teacher came up with a curious notion. After months of hearing pupils whisper, “I’ll just get ChatGPT to write my essay,” the remark had started to wear thin. So that evening, alone in her sitting room with a red pen in her hand, she opened her laptop and typed the French baccalaureate essay question into ChatGPT.

Two clicks later, the AI produced a neat, well-ordered essay. No spelling errors, no crossings-out, no signs of hesitation. Exactly the sort of script that makes tired teachers fantasise at 1 a.m. in June.

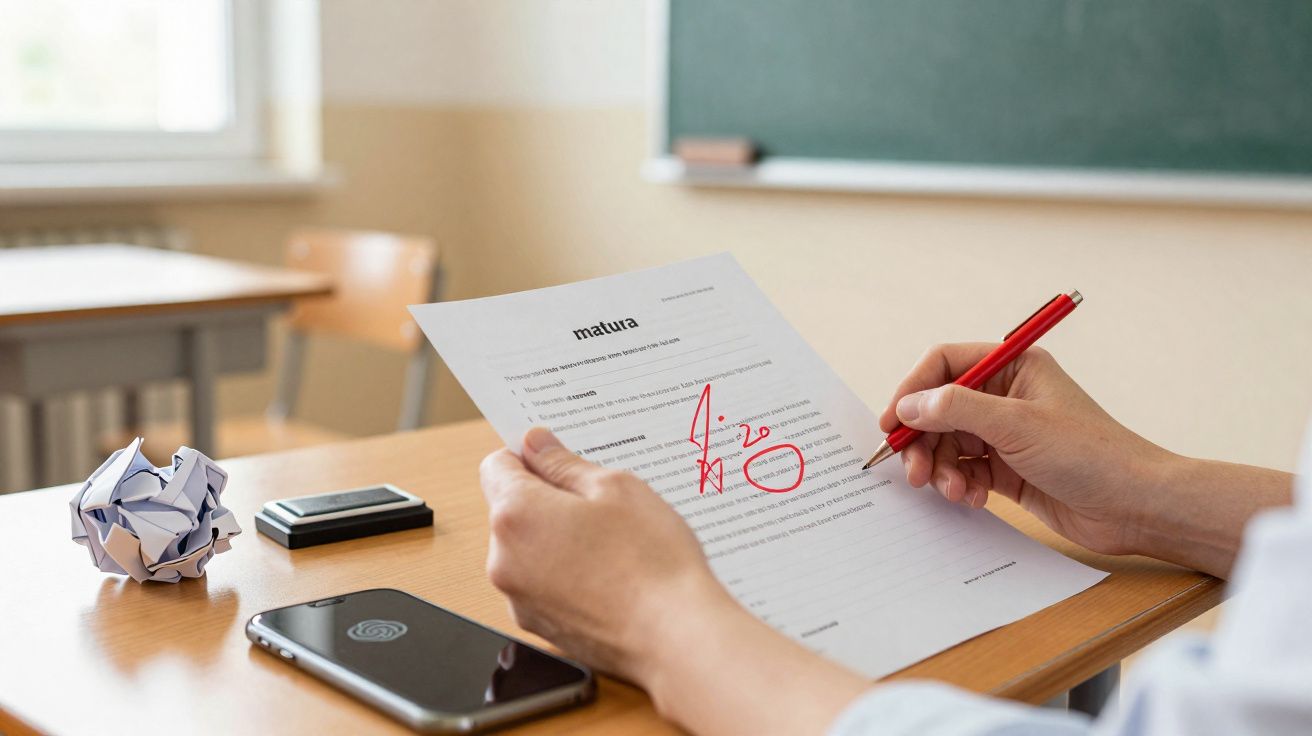

She printed it out, attached it to the official exam marking sheet, and settled down to mark it as though it were yet another anonymous paper.

Twenty minutes later, the judgement was uncompromising.

When ChatGPT enters the exam hall… without a chair

At first glance, the essay looked promising. The introduction was tidy, the central question was clear, and the transitions were respectable. On a quick scan, it was the kind of work that might reassure a flustered student sitting in the exam room. The teacher nodded, slightly impressed and slightly wary.

But as she read on, that favourable first impression quickly disappeared. The ideas stayed close to the subject without ever really grappling with it. The references felt generic, as though they had been lifted from the back pages of a school notebook. There was no individual voice. No real position.

She put her pen down and wrote a blunt 8/20 at the top of the page.

This took place in a genuine secondary school, with a genuine philosophy teacher who has corrected hundreds of scripts over the years. She used an official baccalaureate topic, along with the same marking grid and the same standards: understanding the question, organisation, subject knowledge, reasoning, and quality of expression.

On that grid, the AI’s essay fell apart in exactly the areas where teenagers usually struggle: depth and subtlety. The quotations felt vague, the examples were only half digested, and everything seemed a little too polished. A little too “model pupil” and not enough real thought.

The final mark out of 20 was not merely disappointing. It was a cold splash of water for anyone who believed AI could breeze through the bac on autopilot.

Once the initial surprise had passed, the teacher did what teachers do: she analysed. Why had this technologically impressive essay ended up with such an ordinary mark? She realised that the AI could imitate the shape of a strong essay, but not the intellectual risk. There was no bold thesis, no pointed rebuttal, no moment of genuine reflection.

The AI played it safe. It filled the page without truly engaging with the problem, like a pupil who revises the lesson but never fully understands it.

On the page, it ticked the right boxes - but it felt empty underneath.

ChatGPT and the French Baccalaureate: how to use AI without cheating

The teacher did not stop there. Still a little shaken, she decided to show the AI essay to her class. She projected the text and asked a straightforward question: “What mark would you give this?” The students read in silence, some frowning, others visibly impressed.

At first, many guessed 12, 14 or even 15 out of 20. Then she began breaking the essay down with them, pointing out fuzzy ideas, pseudo-examples, and sentences that sounded profound without actually saying very much.

By the end, the whole class understood why the mark had dropped to 8/20. And they understood something else as well.

That “something else” was this: if you submit an essay written by ChatGPT without changing it, you are taking a serious gamble with your grade. Sometimes the result is acceptable, sometimes completely off topic, and often merely average. Rarely is it brilliant.

The teacher recommended a different approach. Use AI as a brainstorming partner, not as a ghostwriter. Ask it for ideas, opposing arguments, and lists of examples. Then close the tab. Take a pen. Rewrite everything in your own words, using your own judgement.

To be frank, hardly anyone does that every single day. But the students who try it do improve faster than the rest.

There is another point that matters just as much: AI is strongest when the user already has some subject knowledge. If you ask vague questions, you usually get vague answers. If, however, you bring a clear plan, a proper topic, and a sense of what you want to test, the tool can help you refine rather than replace your thinking. That is why the difference between revision support and cheating is not only about what you ask, but about what you do with the answer.

It also means that a pupil who learns to question the model - to challenge its examples, check its logic, and spot what it leaves out - develops a useful habit for exams and beyond. Critical reading remains a human skill, even when the text in front of you was generated by a machine.

She summed it up in one line during that lesson:

“ChatGPT can help you think, but it cannot think for you.”

So the students wrote on the board what they could realistically expect from AI during revision:

- Generate lists of concepts and definitions to revise more efficiently.

- Ask for counter-arguments to strengthen an existing plan.

- Turn a rough draft into a cleaner, more readable version.

- Request examples from literature, history, or current affairs to enrich an idea.

- Simulate exam questions to practise constructing a plan quickly.

Used in this way, the tool stops being a shortcut and becomes a training partner.

What that “brutal” 8/20 really says about us

This failed bac essay produced by ChatGPT tells us less about machines than about our own expectations. We long for a magic answer that would remove stress, hesitation, and the pages left blank through lack of inspiration. Yet the exam still rewards something deeply human: a point of view, sensitivity, and the ability to connect knowledge with lived experience.

Some readers will take this story as proof that AI is overhyped. Others will see it as another reason to panic about the future of schooling. Between those two reactions lies a quieter path: use the tool, but refuse to hand over your thinking.

The teacher who awarded that 8/20 still marks handwritten essays every year. But now, behind every well-structured script, she asks herself one extra discreet question: “Whose voice am I actually reading?”

Why teachers are still looking for more than polished paragraphs

An exam answer can look very competent and still miss the point. Teachers are not only assessing whether a pupil can organise three neat sections and use elegant transitions. They are also looking for judgement, precision, and the courage to take an argument somewhere specific.

That is why an essay that sounds smooth but stays vague can score poorly, even if it is technically well written. In philosophy, and in many other subjects too, style matters - but it is never enough on its own.

| Key point | Detail | Value for the reader |
|---|---|---|
| AI does not yet excel at bac essays | The marked essay received 8/20 using an official grading grid | Reduces blind faith in the idea that “AI will do everything for me” |
| Form is not enough | Neat structure, weak depth, generic examples | Shows what teachers truly want: genuine thought |
| Best use = co-pilot, not pilot | AI for ideas and structure, the pupil for content and nuance | A practical way to use ChatGPT without undermining learning |

FAQ

Can ChatGPT really write a full bac essay?

Yes. It can produce a structured, grammatically correct essay with an introduction, development and conclusion that seem credible at first glance.

So why did the teacher only give 8/20?

Because the essay lacked depth, accurate references, clear analysis and a real position - all of which matter greatly in bac marking.

Is using ChatGPT for homework cheating?

Using it to generate a full essay that you hand in unchanged clearly edges into cheating. Using it to generate ideas that you then reshape yourself is much closer to a study aid.

Can AI help me improve my writing?

Yes, if you ask it to critique your own text, suggest rewrites, or offer alternative ways of phrasing ideas that you then adapt rather than copy.

Will AI eventually reach 18/20 in the bac?

Nobody knows. But if examiners keep shifting towards originality, personal voice and the quality of reasoning, the bar will keep moving.
